IT ticket categorization identifies what the ticket is about, while prioritization determines how quickly it should be handled based on impact and urgency. Mature IT teams use classification, category hierarchies, priority matrices, routing rules, SLAs, and asset/service context to ensure consistent triage at scale.

Why ticket triage breaks under load

Ticket backlogs in mid-market and enterprise environments don’t happen because the service desk isn’t trying hard enough. They happen because the system that decides what a ticket is (categorization) and what gets handled first (prioritization) doesn’t hold up under scale.

IT ticket triage breaks under load when categorization and prioritization are too inconsistent to support scale.

When those two layers aren’t consistent, everything downstream starts to degrade. Routing slows down, SLAs become unreliable, and reporting stops reflecting reality.

A simple scenario exposes the failure mode:

- A senior executive’s laptop fails an hour before a board meeting

- A VPN issue impacts hundreds of remote employees

- A request comes in for a replacement mouse

On paper, these are all “tickets.” Operationally, they are not the same class of problem.

But when they enter the same queue with inconsistent categories and user-defined “urgent” flags, the system stops making decisions. People do.

Triage quietly shifts from structured prioritization to:

- first-in, first-out

- escalation by hierarchy

- or whoever pushes the hardest

That’s not prioritization. That’s queue survival.

A priority matrix exists to prevent exactly this failure, not as a theoretical framework, but as a control system that enforces how work is sequenced when volume increases.

Without it, teams don’t just slow down; they become inconsistent.

Weak categorization doesn’t just slow you down; it distorts the system

At enterprise scale, categorization isn’t an administrative step. It’s a dependency for routing, SLA measurement, and reporting.

ITSM categorization matters because it shapes how tickets are routed, measured, escalated, and reported.

When categories are inconsistent or too broad:

- tickets get reassigned across teams,

- routing logic breaks down,

- SLA tracking becomes unreliable, and

- reporting loses diagnostic value

This is why most ITSM or ticketing platforms treat reassignment as a core performance metric. Reassignment isn’t just inefficiency. It’s a signal that the system failed to understand the work at the intake stage. Once that happens, everything downstream becomes reactive.

You’re no longer managing operations; you’re correcting classification errors in real time.

Mature teams don’t get better at firefighting. They reduce the need for it. They do that by structuring ticket intake so that categorization drives accurate routing, prioritization reflects real business impact, SLAs align with urgency and scope, and reporting feeds back into system improvement.

The goal isn’t faster triage; it’s predictable triage under load. Because at enterprise scale, the question isn’t whether tickets will spike. It’s whether your system still makes the right decisions when they do.

Poor ITSM categorization makes ticket routing, SLA reporting, and problem management less reliable.

Classification, categorization, and prioritization: What each field controls

One of the fastest ways to create noise in a service desk is to treat classification, categorization, and prioritization as the same thing.

They’re not.

There are three separate control points. When they collapse into one, triage becomes inconsistent by design.

A cleaner way to think about them is:

- Classification decides which process owns the work

- Categorization describes what the work is about

- Prioritization determines how quickly it needs to be addressed

The same split in table form:

| Field | Question it answers | Example |
| --- | --- | --- |
| Classification | Which ITSM process owns this? | Incident, request, change |
| Categorization | What is this work about? | Network, identity, hardware |
| Prioritization | How quickly should it be handled? | P1, P2, P3 |

Each one controls a different part of the triage process.

Confuse them, and the system loses its ability to route, sequence, and measure tickets reliably.

- Classification: Which ITSM process owns the ticket

Classification is the first decision the service desk makes.

Strong IT classification prevents incidents, service requests, changes, and major incidents from being routed through the wrong workflow.

It determines whether a ticket is routed to incident management, request fulfillment, or change management.

Get this wrong, and everything downstream, including SLAs, workflows, and approvals, operates on the wrong assumptions.

Most mature ITSM practices converge on a consistent set of definitions:

- Service request → a request for access, information, or a standard service

- Incident → an unplanned interruption or degradation in service

- Major incident → a high-impact disruption likely affecting multiple users or services

- Change → any modification that could affect service delivery

Frameworks like ITIL and providers such as Amazon Web Services treat these distinctions as foundational.

Because classification doesn’t just label work, it determines which workflows are triggered, how risk is assessed, and how success is measured.

If categorization is messy, you get inefficiency. If classification is wrong, you get a process failure.

- Categorization: How the system understands the work

Once the work is in the right process, categorization takes over.

This is where the system answers: What is this actually about?

Done well, categorization enables accurate routing, meaningful reporting, trend detection, and proactive problem management.

Done poorly, it creates noise. Tickets bounce between teams. Reports flatten into “Other”, making trend analysis less useful. Root cause analysis becomes guesswork.

There’s no universal template here, and that’s the point.

Your category structure should reflect:

- How your services are delivered

- How your teams are organized

- How you want to analyze performance

Rigid “best practice” taxonomies often fail because they don’t match the operating model.

Good categorization isn’t standardized. It’s aligned.

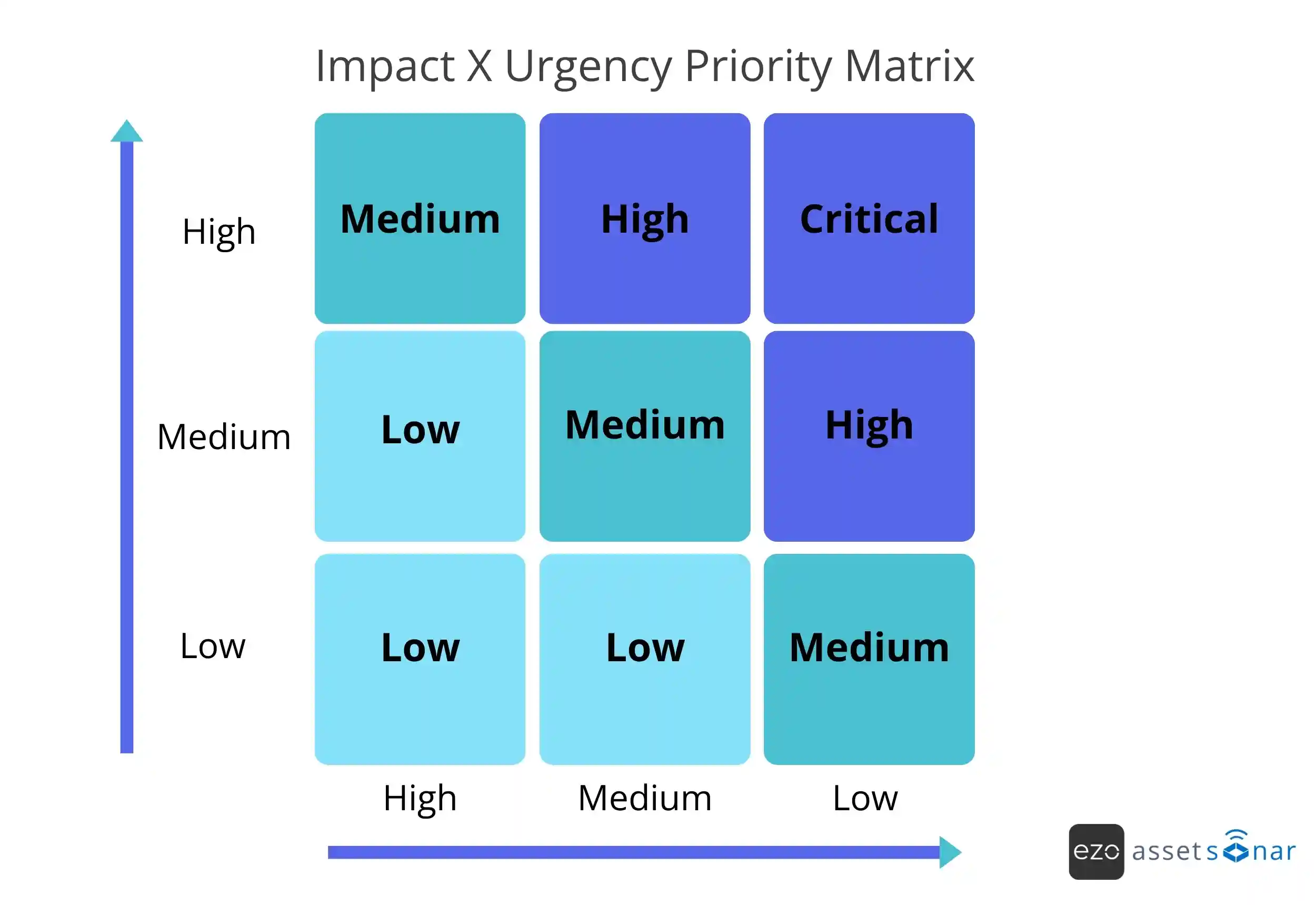

- Prioritization: Enforcing how work gets sequenced

Priority is where most systems break.

Not because teams don’t understand urgency, but because urgency is often captured as opinion instead of data.

When users can mark everything as “high”, priority stops being a signal. It becomes noise.

In a stable system, priority is not chosen; it’s derived from:

- Impact → how many users or services are affected

- Urgency → how quickly the issue needs resolution

Mapped through a predefined matrix, this removes subjectivity and ensures that:

- A VPN outage affecting hundreds outranks a single-user issue, or

- A business-critical failure is treated differently from a low-impact request

Priority isn’t about importance to the requester. It’s about the impact on the business.

The minimum data model: What your triage system actually runs on

If triage is the decision layer, the data model is what powers it.

Most breakdowns don’t happen because teams lack effort; they happen because the system lacks structure.

At mid-market to enterprise scale, a minimum viable model looks like this:

| Field | Purpose | Example |

| Classification | Routes work into the correct process | Incident, request, change, major incident |

| Category hierarchy | Provides 2-4 levels of structure for routing and trend analysis without overlifting | Network to VPN to authentication |

| Impact and urgency fields | Structures the inputs that map to priority through a predefined matrix, instead of free-text judgment | 200 users are affected (moderate-high), and the payroll deadline is today (high) |

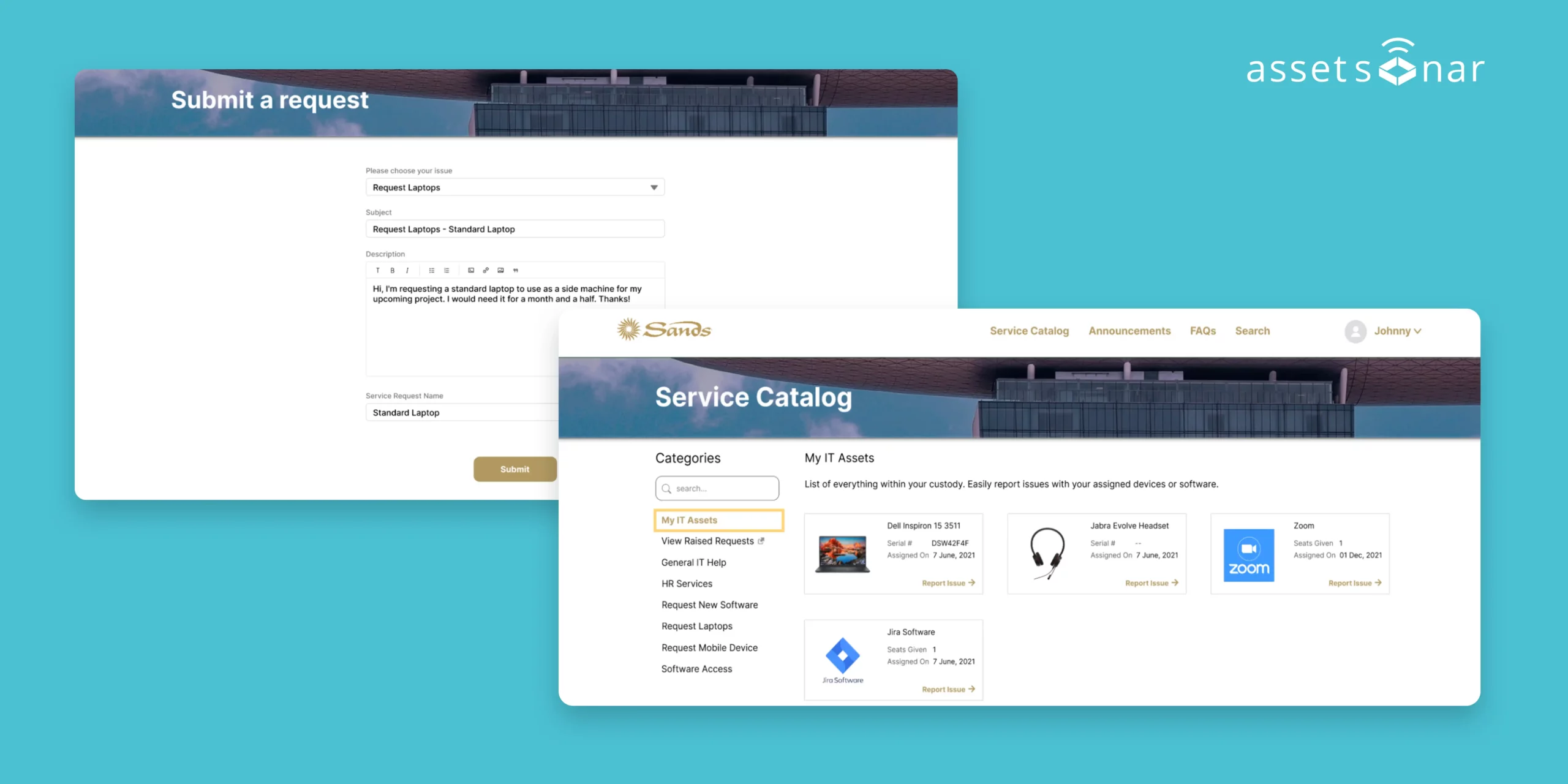

| Service/asset/CI linkage | Connects tickets to what is actually affected, enabling context and downstream analysis | VPN gateway, laptop, SaaS app |

| SLA definitions tied to priority | Ensure response and resolution targets are consistent and enforceable | P1 response within 15 minutes |

This isn’t about capturing more data. It’s about capturing the right data at the point of intake, so the system can make decisions with less manual intervention.
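As a rough illustration, the minimum model above could be captured as a small intake record. This is a sketch under stated assumptions: the class names, field names, and the `missing_fields` helper are hypothetical and do not reflect the schema of any particular ITSM platform.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Classification(Enum):
    INCIDENT = "incident"
    REQUEST = "request"
    CHANGE = "change"
    MAJOR_INCIDENT = "major_incident"


class Level(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Ticket:
    """Hypothetical minimum intake record; field names are illustrative."""
    summary: str
    classification: Classification
    category_path: list[str]           # e.g. ["Network", "VPN", "Authentication"]
    impact: Level
    urgency: Level
    affected_ci: Optional[str] = None  # linked service, asset, or CI, if known

    def missing_fields(self) -> list[str]:
        """Return the intake fields that are still empty and block automated triage."""
        missing = []
        if not self.category_path:
            missing.append("category_path")
        if self.affected_ci is None:
            missing.append("affected_ci")
        return missing


ticket = Ticket(
    summary="VPN login fails for remote staff",
    classification=Classification.INCIDENT,
    category_path=["Network", "VPN", "Authentication"],
    impact=Level.HIGH,
    urgency=Level.HIGH,
)
print(ticket.missing_fields())  # ['affected_ci']
```

Making impact and urgency enumerated fields rather than free text is the design choice that matters here: it gives the downstream matrix, routing, and SLA logic stable inputs.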

From queue management to system design

Most teams approach triage as a people problem: Train agents better, escalate faster, and respond quicker.

But triage failures are rarely about effort.

They’re about system design.

When classification, categorization, and prioritization are clearly defined and supported by a structured data model, triage stops being reactive. It becomes predictable.

And that’s the shift that matters.

You’re not improving how tickets are handled.

You’re designing a system that handles them correctly, even when volume spikes.

Building a categorization structure that survives real-world use

A categorization scheme isn’t valuable because it looks clean in a dropdown.

It’s valuable because it stays stable long enough to support:

- reporting

- problem management

- and continuous improvement

If it changes every few weeks or collapses into “Other”, it’s not a system. It’s a formality.

Categorization is an operational control. And like any control, it either holds under pressure or it fails when volume increases.

What mature teams actually optimize for

Strong categorization isn’t about perfect labels. It’s about making the system usable across workflows.

Three outcomes matter.

1. Consistency across processes

When categorization is consistent, it connects workflows that are usually fragmented:

- event and monitoring systems

- incident management

- request fulfilment

- problem management

This is what allows upstream systems to correctly classify issues, automatically trigger incidents, and maintain context as work moves across processes.

Without that consistency, every handoff becomes a reinterpretation.

2. Trend visibility and root cause clarity

Categorization is what turns tickets into data, and data into decisions.

Industry bodies like HDI explicitly tie categorization quality to trend analysis, root cause identification, and proactive improvement.

When categories are inconsistent:

- patterns disappear,

- recurring issues look unrelated,

- and improvement work becomes reactive.

You don’t just lose visibility. You lose the ability to prioritize what actually matters.

3. Routing efficiency and fewer handoffs

Categorization is also what determines where work goes.

When it’s working:

- tickets land with the right team the first time

- resolution time drops

- handoffs decrease

When it’s not:

- tickets bounce between teams

- ownership becomes unclear

- SLA performance degrades

Most ITSM platforms track reassignment rates for this reason.

Because every reassignment signals that the system didn’t understand the work at intake.

Designing a structure that holds up over time

Most categorization schemes don’t fail because they’re wrong.

They fail because they’re designed in isolation and then exposed to real-world variability.

A few design principles consistently show up in systems that last.

1. Use hierarchy, but keep it shallow enough to be usable

Multi-level categorization is necessary, but depth is a tradeoff.

Too shallow:

- You lose useful detail

- Reporting becomes generic

Too deep:

- Classification slows down

- Accuracy drops

- Agents default to guessing

In practice, a 2–4 level hierarchy is where most systems stabilize.

Enough structure to route and analyze. Not so much that it slows intake or forces agents to guess.

2. Build from real ticket data, not assumptions

The fastest way to design a broken taxonomy is to start with a blank sheet and “best practices”.

Mature teams start with reality.

They look at recent ticket volumes, common request types, and recurring incidents.

The review should help you:

- group recurring requests and incident types

- identify top ticket volume drivers

- map categories to service or team ownership

- remove categories that do not have enough volume to justify them

Then shape the top level based on what actually arrives, not what should arrive.

This grounds the system in operational truth instead of theory.

3. Keep the top level stable but not vague

Your top-level categories do most of the heavy lifting.

They need to be broad enough to stay stable over time, yet specific enough to be meaningful.

In practice, this usually converges around:

- ~10–12 high-level categories

Anything more, and you fragment reporting. Anything less, and everything collapses into ambiguity.

4. Treat “Other” as a signal, not a solution

“Other” is useful, but only briefly.

It captures edge cases while the system is still stabilizing.

But if it becomes a permanent category, it’s a sign of failure.

As a practical benchmark, if more than 5-10% of tickets fall into “Other,” the category structure should be reviewed.

Mature teams review the “Other” category regularly, extract patterns, and fold them back into the structure. “Other” isn’t where tickets should mostly live. It’s where gaps in the system are exposed.
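The “Other” benchmark can be checked with a few lines of reporting code. The sketch below assumes you can export the top-level category of each ticket as a list of strings; the function name and the choice of 10% as the review threshold (the upper end of the range above) are illustrative assumptions.

```python
from collections import Counter

# Upper end of the 5-10% benchmark; an assumption to tune for your environment.
OTHER_REVIEW_THRESHOLD = 0.10


def other_share(top_level_categories: list[str]) -> float:
    """Return the fraction of tickets whose top-level category is 'Other'."""
    if not top_level_categories:
        return 0.0
    counts = Counter(top_level_categories)
    return counts["Other"] / len(top_level_categories)


sample = (
    ["Network"] * 40
    + ["Identity & Access"] * 30
    + ["End-User Computing"] * 18
    + ["Other"] * 12
)
share = other_share(sample)
print(f"{share:.0%}", share > OTHER_REVIEW_THRESHOLD)  # 12% True
```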

5. Put categorization under change control

Once your structure stabilizes, changing it casually breaks more than it fixes.

Because categorization isn’t just operational, it’s historical.

If you change it without control:

- Trend data becomes inconsistent

- Reporting loses continuity

- Improvement efforts lose context

In practice, that means category changes should be reviewed, justified, and implemented deliberately rather than adjusted on the fly.

6. Avoid symptom-based categories

This is one of the most common failure modes.

Categorizing by symptoms (e.g., “slow,” “not working,” “error”) creates fragmentation. The same underlying issue ends up in multiple categories, e.g.:

- “VPN slow”

- “VPN not connecting”

- “VPN intermittent”

Now you don’t have three issues. You have one issue hidden in three places.

Symptoms belong in metadata: short descriptions, tags, diagnostic fields, or analyst notes. Categories should instead reflect what is affected:

- the system

- the service

- or the component

That’s what keeps data consistent and usable.

A practical structure that works in most environments

There’s no universal taxonomy, but some patterns consistently hold up.

A common approach used across many enterprise environments is technology or service-based categorization.

At the top level, these categories often look like:

- End-User Computing

- Identity & Access

- Network

- Business Applications

- Collaboration and Communication

- Mobile Device Management

This aligns categorization with ownership, reporting, and service delivery.

Guidance from organizations like HDI reinforces this idea: The “right” structure depends on how your organization operates, not on a predefined template.

Where detail actually belongs

Instead of expanding the top level endlessly, mature systems push detail lower.

- Top-level → service or domain

- Lower levels → application, component, or CI

For example: Business Applications → Salesforce → Login issue → SSO

This keeps the structure stable at the top yet flexible at the bottom. And most importantly, it keeps routing and reporting intact without overwhelming the intake process.

A rule of thumb is: if details change often, they probably belong lower in the hierarchy or in metadata, not as a top-level category.
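To make the split between a stable top level and flexible lower levels concrete, here is a minimal sketch that parses a category path such as the Salesforce example and rejects paths outside the 2–4 level range discussed earlier. The function name and the use of “→” as a separator are assumptions for illustration.

```python
MIN_DEPTH, MAX_DEPTH = 2, 4  # the 2-4 level range discussed earlier


def parse_category_path(path: str) -> list[str]:
    """Split 'A → B → C' into levels and reject paths outside the depth range."""
    levels = [part.strip() for part in path.split("→")]
    if any(not level for level in levels):
        raise ValueError(f"empty level in category path: {path!r}")
    if not MIN_DEPTH <= len(levels) <= MAX_DEPTH:
        raise ValueError(f"expected {MIN_DEPTH}-{MAX_DEPTH} levels, got {len(levels)}")
    return levels


levels = parse_category_path("Business Applications → Salesforce → Login issue → SSO")
top_level, detail = levels[0], levels[1:]
print(top_level)  # Business Applications
print(detail)     # ['Salesforce', 'Login issue', 'SSO']
```

Reporting can then group on `top_level` alone, while routing and diagnosis use the lower levels.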

The next step: Connecting categorization to real systems

Once categorization is stable, it stops being just a classification tool. It serves as a connector among tickets, services, assets, and configuration items. That’s where triage evolves from queue management into system-level visibility.

Because now you’re not just tracking issues; you’re understanding where and why they happen.

How to prioritize IT tickets using impact and urgency

Most service desks don’t struggle with prioritization because they lack a matrix. They struggle because priority is still being treated as a choice.

When priority is assigned based on who raised the ticket, how it’s worded, or how “urgent” it feels or sounds, the system stops being objective. Once that happens, prioritization becomes negotiation.

Impact × urgency: The prioritization signal that scales

Across ITSM frameworks and tooling, there’s a broad agreement on one thing: Priority should be derived, not selected.

Specifically, from the intersection of:

- Impact → how much of the business is affected

- Urgency → how quickly the impact needs to be addressed

This is the foundation of the priority matrix used across frameworks like ITIL and ITSM tools because it solves a fundamental problem: It replaces subjective urgency with a consistent decision model.

For example, a single-user laptop issue may feel urgent to the requester, but a VPN outage affecting hundreds of employees has a higher business impact.

When implemented correctly, this does three things:

- removes first-in, first-out bias

- prevents escalation by hierarchy

- enforces consistent sequencing under pressure

Priority stops being reactive. Instead, it becomes a system output.
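A derived priority can be expressed as a plain lookup table. The sketch below is one possible 3×3 matrix, not a standard: the specific cell values, the P1–P4 labels, and the function name are assumptions, and real matrices vary by organization.

```python
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical 3x3 matrix: (impact, urgency) -> priority label.
PRIORITY_MATRIX = {
    (Level.HIGH, Level.HIGH): "P1",
    (Level.HIGH, Level.MEDIUM): "P2",
    (Level.MEDIUM, Level.HIGH): "P2",
    (Level.HIGH, Level.LOW): "P3",
    (Level.MEDIUM, Level.MEDIUM): "P3",
    (Level.LOW, Level.HIGH): "P3",
    (Level.MEDIUM, Level.LOW): "P4",
    (Level.LOW, Level.MEDIUM): "P4",
    (Level.LOW, Level.LOW): "P4",
}


def derive_priority(impact: Level, urgency: Level) -> str:
    """Look priority up from the matrix; the requester's own flag is not an input."""
    return PRIORITY_MATRIX[(impact, urgency)]


print(derive_priority(Level.HIGH, Level.HIGH))  # VPN outage affecting hundreds -> P1
print(derive_priority(Level.LOW, Level.HIGH))   # single-user laptop issue -> P3
print(derive_priority(Level.LOW, Level.LOW))    # replacement mouse -> P4
```

Because the function takes only impact and urgency, a requester’s “urgent” flag can still be recorded for context without changing the outcome.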

Defining impact and urgency so they actually mean something

“High, Medium, Low” only works if everyone agrees on what those words mean.

In most organizations, they don’t. Which is why mature teams don’t just define levels. They define them with context.

Impact is not “how important this feels”. It’s the measurable effect on the business, typically defined by:

- number of users affected

- criticality of the service

- financial or operational risk

Urgency is not “ASAP”. It’s the time window before the impact becomes unacceptable. This is typically defined by:

- business deadlines

- operational dependencies

- risk escalation over time

Organizations like the University of Alaska Anchorage explicitly document these definitions with examples.

Without shared definitions, analysts are forced to interpret intent, which doesn’t scale.

The real fix: Train the organization, not just the service desk

One of the most common failure modes is assuming prioritization is the service desk’s responsibility. It’s not.

It’s an organizational agreement.

If only analysts understand the impact and urgency, users will continue to mark everything as urgent, expectations will remain misaligned, and priority disputes will persist.

Mature teams do something different. They train the entire organization on:

- what impact means

- what urgency means

- and how priority is derived

Because the goal isn’t faster triage. It’s fewer arguments about what matters.

Furthermore, training should be integrated into the ticket intake experience. You can do the following to enforce this:

- Add examples beside the impact and urgency fields

- Use tooltips to explain priority levels

- Explain that the requester’s urgency may be adjusted based on business impact

- Train managers on when escalation is appropriate

Why “everything is urgent” is a system problem, not a user problem

Users will always try to escalate their requests. That’s rational human behavior.

The system’s job is to absorb that pressure, not reflect it.

Take this common example:

A user requests a replacement mouse and marks it “high priority.” From their perspective, that’s reasonable. From a system perspective, however, it’s low impact.

Guidance aligned with standards like ISO/IEC 20000 makes this clear: Priority should not be set by the requester when a defined matrix exists.

The reason is simple: users optimize for their own urgency, whereas the system must optimize for business impact.

Prioritization is not a preference. It’s a governance function.

A practical rule is to allow users to describe urgency, but derive the final priority from impact, urgency, and service context.

Major incidents: When prioritization is no longer enough

Not all high-priority tickets are equal, and this is where many service desks break down. They treat a P1 ticket and a major incident as the same thing. They’re not.

A P1 is a priority level. A major incident is a different operating mode altogether.

Cornell University describes a ‘major incident’ as:

- a disruption likely to trigger multiple related incidents

- or significantly degrade a critical service

The key distinction isn’t just severity; it’s coordination.

The difference looks like this:

| P1 ticket | Major incident |
| --- | --- |
| High-priority work item | Coordinated response mode |
| May affect one critical user or service | Usually affects multiple users, services, or business functions |
| Managed through ticket workflow | Managed through command, communication, and post-incident review |

Switching modes: From queue handling to incident command

When a major incident is declared, the system should change its behavior, not just priority.

Incident command means assigning clear roles for coordination, communication, technical response, and stakeholder updates.

This typically includes:

- a dedicated Incident Lead or command structure

- centralized communication (status updates, stakeholder alignment)

- parallel workstreams instead of linear ticket handling

- formal post-incident review and problem management

Common roles it might include:

- Incident Lead

- Communications Owner

- Technical Lead

- Stakeholder Liaison

- Scribe or Post-Incident Review owner

If this doesn’t happen, something predictable occurs: a major incident is reduced to a “high-priority ticket” sitting in a queue that is not designed to handle systemic failures.

The shift: From prioritization to controlled response

Priority matrices are essential, but they’re only part of the system. They ensure the right work gets attention first. They don’t ensure the right response when things escalate.

Mature teams understand the difference.

- Prioritization decides what gets worked next

- Major incident management decides how the organization responds

One is sequencing. The other is coordination.

Priority tells the team what to work on first. Major incident management outlines how the organization should respond when normal ticket handling is no longer sufficient. Confusing the two is how critical issues end up being treated like normal tickets, just with more urgency attached.

Routing, escalation, and SLA design: Removing guesswork from the system

Once categorization and priority are structured, most teams assume the hard part is done. It’s not.

Because even with clean inputs, the system can still fail at execution.

- Tickets land in the wrong queues

- Escalations happen too late or too often

- SLAs become theoretical instead of enforceable

This is where maturity shifts from decision-making to system behavior.

The goal isn’t better triage; it’s less manual triage.

At a workflow level, the system should connect: Classification → Category → Priority → Routing rule → SLA target → Escalation trigger

Automation doesn’t fix bad definitions; it amplifies them

There’s a common assumption that automation will clean up inconsistency. In reality, it does the opposite.

Automation scales whatever logic you give it.

If your definitions are unclear:

- Priority gets assigned inconsistently, just faster

- Routing errors happen automatically

- Escalation noise increases

Automation relies on clearly defined inputs, especially impact and urgency, which leads to a simple rule: Automation is only as reliable as the definitions it encodes.

If the inputs are unstable, the system becomes predictably wrong at scale.

1. Routing should be deterministic, not dependent on human sorting

In low-volume environments, routing can be manual. At enterprise scale, that breaks quickly.

Because every manual routing decision introduces delays, inconsistencies, and cognitive load for the service desk.

In a stable system, routing is driven by:

- Classification → which process owns the work

- Categorization → which team owns the domain

- Priority → how quickly it needs attention

When these are structured correctly, most routine tickets don’t need manual review before assignment. They usually arrive where they belong.

For example:

- If the category is Network and the service is VPN, route to Network Operations.

- If the category is Identity & Access and the type is Access Request, route to the IAM queue.

- If the classification is Change, route to the change approval workflow.

The service desk stops acting as a traffic controller and starts acting as a system monitor.
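Deterministic routing of this kind can be sketched as an ordered rule list where the first match wins. Everything named here is an assumption for illustration: the ticket is a plain dictionary, the queue names mirror the examples above, and the fallback queue is a hypothetical catch-all for tickets that no rule matches.

```python
from typing import Callable, NamedTuple


class Rule(NamedTuple):
    name: str
    matches: Callable[[dict], bool]
    queue: str


# Rules are evaluated in order; the first match wins. Queue names are illustrative.
ROUTING_RULES = [
    Rule("change", lambda t: t["classification"] == "change", "Change Approval"),
    Rule("vpn", lambda t: t["category"][:2] == ["Network", "VPN"], "Network Operations"),
    Rule(
        "access-request",
        lambda t: t["category"][:1] == ["Identity & Access"]
        and t.get("type") == "access_request",
        "IAM",
    ),
]

FALLBACK_QUEUE = "Service Desk Review"  # anything unmatched gets a human look


def route(ticket: dict) -> str:
    """Return the queue for a ticket using the first matching rule."""
    for rule in ROUTING_RULES:
        if rule.matches(ticket):
            return rule.queue
    return FALLBACK_QUEUE


print(route({"classification": "incident", "category": ["Network", "VPN", "Authentication"]}))
# Network Operations
print(route({"classification": "request", "category": ["Identity & Access"], "type": "access_request"}))
# IAM
print(route({"classification": "incident", "category": ["End-User Computing", "Laptop"]}))
# Service Desk Review
```

Tracking how often tickets hit the fallback queue gives a simple measure of how much manual sorting remains.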

2. Escalation should be intentional, not a side effect of failure

Escalation is one of the most misunderstood parts of ITSM.

In many environments, it happens accidentally:

- Tickets sit too long → someone escalates

- The wrong team gets it → it gets passed on

- Urgency increases → escalation becomes reactive

That’s not escalation. That’s a correction.

Mature systems treat escalation as a designed path, not a fallback.

The path can include different types of escalation:

- Functional escalation: moving work to a more specialized team

- Hierarchical escalation: involving management or service owners

- SLA escalation: triggering action based on time thresholds

- Major incident escalation: switching into a coordinated response mode

What scalable escalation actually looks like

Operationally, a few patterns show up consistently across high-performing teams:

- Dedicated escalation paths: Escalated work is explicitly flagged and routed into a separate queue, so it doesn’t get lost in the standard ticket flow.

- SLA-driven escalation triggers: Escalation is tied to time and thresholds, not perception (e.g., breach risk triggers notifications or reassignment automatically).

- Visible escalation states in workflows: Statuses like “Escalated to Tier 2” or “Escalated to Tier 3” make escalation trackable and measurable.

These aren’t just workflow tweaks. They’re how the system exposes where work is breaking down.

Measuring escalation: a signal, not just an outcome

Escalation frequency isn’t just an operational metric. It’s also diagnostic.

Useful escalation metrics include:

- Escalation rate by category

- Time to first escalation

- Percentage of escalations caused by misrouting or SLA breach risk

- Average number of handoffs per ticket

Robust ITSM platforms treat reassignment and handoffs as key indicators because when tickets move between teams too often, it usually points to:

- weak categorization

- unclear ownership

- or broken routing logic

Escalation should be expected. But it should also be explainable.

If it’s happening unpredictably, the system is leaking.
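Two of these signals, reassignment rate and average handoffs per ticket, can be computed from assignment history alone. The sketch below assumes each ticket’s history is available as an ordered list of team names; the function name, ticket IDs, and data shape are hypothetical.

```python
def handoff_metrics(assignment_history: dict[str, list[str]]) -> dict[str, float]:
    """Compute reassignment rate and average handoffs from per-ticket team histories.

    Each history is the ordered list of teams a ticket was assigned to;
    a handoff is any move from one team to the next.
    """
    if not assignment_history:
        return {"reassignment_rate": 0.0, "avg_handoffs": 0.0}
    handoffs = [max(len(teams) - 1, 0) for teams in assignment_history.values()]
    reassigned = sum(1 for h in handoffs if h > 0)
    total = len(handoffs)
    return {
        "reassignment_rate": reassigned / total,
        "avg_handoffs": sum(handoffs) / total,
    }


history = {
    "T-1": ["Service Desk", "Network Operations"],
    "T-2": ["Service Desk"],
    "T-3": ["Service Desk", "IAM", "Network Operations"],
    "T-4": ["Network Operations"],
}
print(handoff_metrics(history))
# {'reassignment_rate': 0.5, 'avg_handoffs': 0.75}
```

Grouping the same calculation by category would show which parts of the taxonomy generate the most bouncing.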

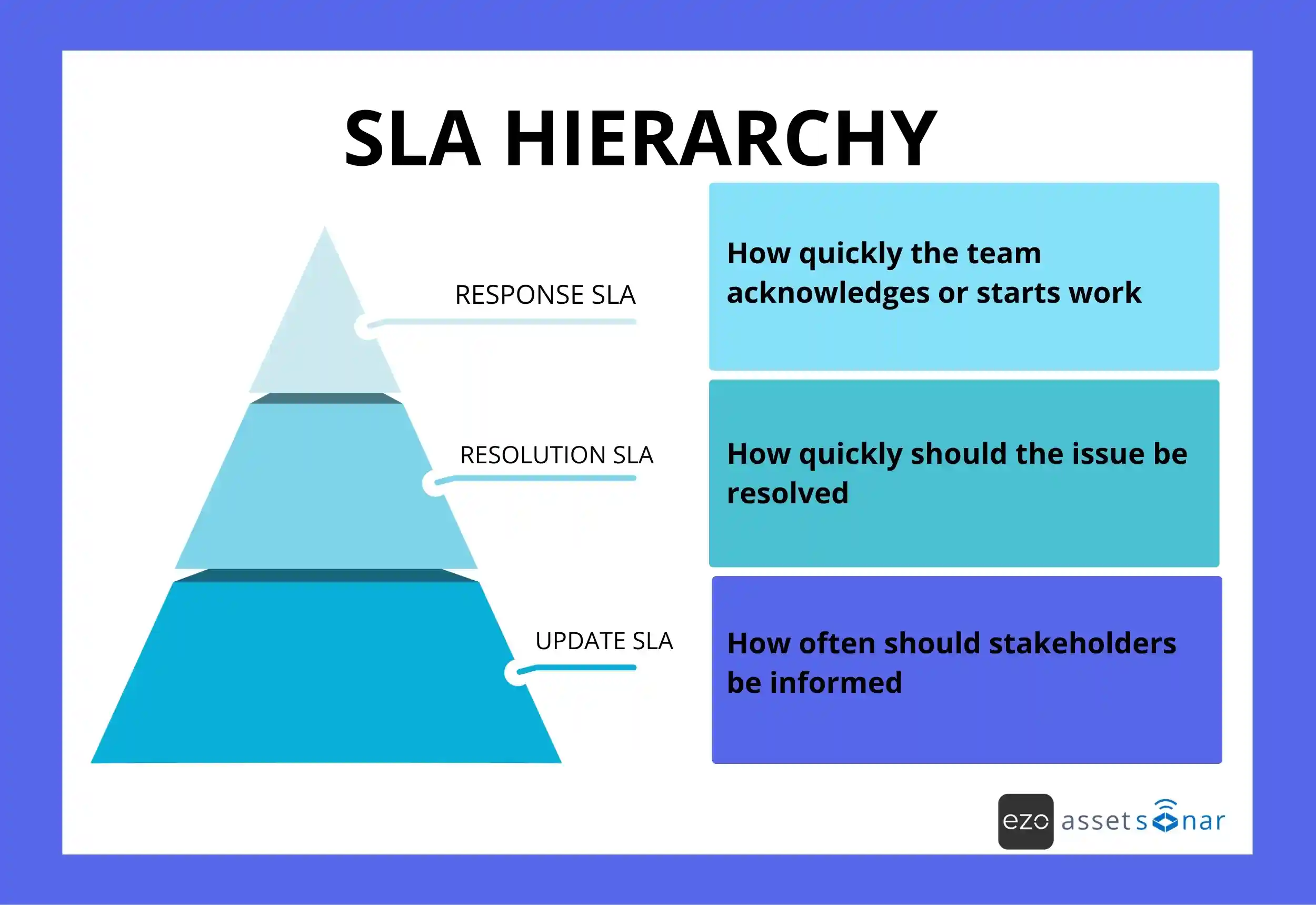

3. SLAs: Where priority becomes enforceable

Priority defines what matters. SLAs define what happens next.

Without SLAs, priority is just a label. With SLAs, it becomes a commitment.

That commitment usually comes with more than one layer: a response target, a resolution target, and an update cadence.

Designing SLAs that actually hold under pressure

One of the most common mistakes is treating SLAs as static targets. In reality, they’re operational agreements, and they need to be designed with context in mind.

That context should include:

- staffing levels

- support hours

- service criticality

- escalation coverage

- vendor dependency

Guidance from organizations like the University of Alaska Anchorage reflects that:

- Response and resolution targets should be defined per priority.

- Expectations should be agreed with stakeholders.

- Definitions should evolve as real scenarios emerge.

Because there is no universal SLA model. It can differ based on your:

- service criticality

- risk tolerance

- and operational capacity

A sample SLA model might look like:

| Priority | Response target | Resolution target | Update cadence |
| --- | --- | --- | --- |
| P1 | 15 minutes | 4 hours | Every 30 minutes |
| P2 | 1 hour | 8 hours | Every 2 hours |
| P3 | 4 hours | 2 business days | Daily |
| P4 | 1 business day | 5 business days | As needed |

The table shows how SLA targets can be tied to ticket priority, ensuring each priority level has a clear response time, resolution expectation, and communication cadence. These values are only examples; the actual targets should reflect your team’s support hours, staffing, service criticality, and risk tolerance.
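To show how targets like these become enforceable, the sketch below turns the sample resolution targets into deadlines and a breach-risk check. Several things are assumptions: the 80% risk threshold, the function names, and the simplification of treating “business days” as calendar days, which a real implementation would replace with a support-hours calendar.

```python
from datetime import datetime, timedelta

# Resolution targets from the sample table. For simplicity this sketch treats
# "business days" as calendar days; a real calendar would need support hours.
RESOLUTION_TARGETS = {
    "P1": timedelta(hours=4),
    "P2": timedelta(hours=8),
    "P3": timedelta(days=2),
    "P4": timedelta(days=5),
}

BREACH_RISK_FRACTION = 0.8  # assumed threshold: flag once 80% of the target has elapsed


def resolution_due(priority: str, opened_at: datetime) -> datetime:
    """Return the deadline implied by the priority's resolution target."""
    return opened_at + RESOLUTION_TARGETS[priority]


def at_breach_risk(priority: str, opened_at: datetime, now: datetime) -> bool:
    """Return True once the elapsed time reaches the risk share of the target."""
    elapsed = now - opened_at
    return elapsed >= RESOLUTION_TARGETS[priority] * BREACH_RISK_FRACTION


opened = datetime(2024, 1, 8, 9, 0)
print(resolution_due("P1", opened))  # 2024-01-08 13:00:00
print(at_breach_risk("P1", opened, opened + timedelta(hours=3)))              # False
print(at_breach_risk("P1", opened, opened + timedelta(hours=3, minutes=15)))  # True
```

A scheduled job that runs `at_breach_risk` over open tickets is one simple way to drive notifications or reassignment before a target is missed.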

How the pieces connect

At a system level, this is how it fits together:

- Priority matrix → determines what matters first

- Routing logic → determines where work goes

- Escalation rules → determine what happens when thresholds are hit

- SLAs → define the expected timelines and outcomes

Each layer reinforces the others.

If one breaks, the system compensates, usually with manual effort.

What controlled ticket execution looks like

Most service desks are still designed around people making decisions, including reviewing queues, assigning tickets, and escalating manually.

That works until volume increases.

Mature teams design systems where:

- decisions are embedded in workflows,

- escalation is predictable, and

- SLAs are enforceable without constant intervention

Because the goal isn’t to respond faster. It’s to remove the need for constant decision-making in the first place.

A controlled execution model should be able to answer:

- Are routing rules automatic for routine tasks?

- Are escalation triggers defined?

- Are SLA targets tied to priority?

- Are reassignment rates tracked?

- Are ownership rules documented?

Linking tickets to assets and services: Where triage becomes system-aware

Up to this point, triage has been about structuring decisions, i.e., what the ticket is, where it goes, and how quickly it needs attention.

Linking tickets to assets, configuration items (CIs), and services changes something more fundamental.

A CI is a component, such as an application, server, device, or service, that supports IT service delivery. Linking a ticket to it gives the system context. Without context, even well-structured tickets are still isolated.

Why tickets without context slow everything down

In most environments, tickets are treated as standalone records: a description, a category, and a priority.

While that’s enough to route work, it’s not enough to understand it.

Without context:

- Helpdesk analysts have to reconstruct what’s affected

- Impact is estimated based on guesswork

- Root cause analysis starts from scratch every time

The system can process tickets. It can’t interpret them.

Useful context can include:

- affected service

- affected asset

- user department

- location

- configuration items

- linked incidents

What changes when tickets are linked to assets, services, and CIs

When tickets are connected to assets, configuration items (CIs), and services, they stop being descriptions. They become signals.

Frameworks aligned with ITIL define CIs as the components that deliver services, along with their relationships, dependencies, and history.

ITSM platforms extend this into practice by linking incidents and problems directly to those components. The result is not just better organization. It is faster diagnosis.

Because now the system can answer:

- What service is affected

- Which assets are involved

- Who owns them

- What dependencies might be at risk

Resolution stops being investigative. It becomes informed.

The operational shift: from tickets to evidence

This is where service desks evolve into operational systems.

Without asset and service linkage:

- Tickets are individual stories

- Patterns are hard to detect

- Impact is approximated

With linkage:

- Tickets become part of a dataset

- Recurring issues surface faster

- Root cause analysis becomes structured

The evidence can include the affected CI, the related service, recent changes, prior incidents, and linked alerts.

You’re no longer asking: “What is this issue about?”

You’re asking: “Where does this issue fit in the system?”

That’s a fundamentally different level of maturity.

Why most CMDB initiatives fail and how to avoid them

At this point, many teams try to solve the problem by building a “complete” CMDB.

That’s where things usually break because the goal shifts from enabling operations to centralizing everything.

Trying to force all data into a single system creates maintenance overhead, data quality issues, and slow adoption.

Industry data often shows that only a minority of CMDB initiatives deliver meaningful value. Not because CMDBs don’t work, but because they’re scoped incorrectly.

The right boundary: Enable decisions, don’t model everything

Mature teams take a different approach. They don’t try to model the entire environment. They focus on the services that matter, the assets that support them, and the relationships needed to make decisions.

Data can stay distributed across systems as long as it’s accessible, connected, and usable at the point of triage.

The goal isn’t completeness. It’s operational relevance.

A useful rule of thumb is: if a CI relationship does not improve routing, impact assessment, escalation, or root cause analysis, it probably should not be a part of the first CMDB scope.

How does this feed back into prioritization?

This is where everything connects. Earlier, priority was defined by impact and urgency.

But impact is often guessed until the system has context.

When tickets are linked to services and assets, impact becomes measurable:

- How many users rely on the affected service

- Which business functions are disrupted

- What dependencies are involved

For example, if a server issue affects payroll before a payroll cutoff, the impact and urgency increase. If the same server supports a non-critical test environment, priority may be lower.
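The payroll example can be sketched as a priority lookup driven by service context. Everything specific here is an assumption made for illustration: the thresholds (100 users, 20 users, 24 hours), the three-level scales, and the P1 to P4 labels. Your own matrix and definitions would replace them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ServiceContext:
    """What the system knows about the service behind the affected server."""
    name: str
    users_affected: int
    business_critical: bool
    hours_to_deadline: Optional[float] = None  # e.g. time until payroll cutoff


def impact_level(svc: ServiceContext) -> int:
    """1 = high, 3 = low. Thresholds are illustrative assumptions."""
    if svc.business_critical and svc.users_affected >= 100:
        return 1
    if svc.business_critical or svc.users_affected >= 20:
        return 2
    return 3


def urgency_level(svc: ServiceContext) -> int:
    """1 = high, 3 = low. A near deadline raises urgency."""
    if svc.hours_to_deadline is not None and svc.hours_to_deadline <= 24:
        return 1
    if svc.business_critical:
        return 2
    return 3


# (impact, urgency) -> priority; an assumed 3x3 matrix.
PRIORITY_MATRIX = {
    (1, 1): "P1", (1, 2): "P2", (1, 3): "P3",
    (2, 1): "P2", (2, 2): "P3", (2, 3): "P4",
    (3, 1): "P3", (3, 2): "P4", (3, 3): "P4",
}


def priority(svc: ServiceContext) -> str:
    return PRIORITY_MATRIX[(impact_level(svc), urgency_level(svc))]


payroll = ServiceContext("payroll", users_affected=400,
                         business_critical=True, hours_to_deadline=6)
test_env = ServiceContext("test-env", users_affected=5, business_critical=False)

print(priority(payroll))   # P1
print(priority(test_env))  # P4
```

The same server issue lands at opposite ends of the matrix because the inputs come from what the server supports, not from how the ticket was worded.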

Standards aligned with ISO/IEC 20000 and guidance from sources like Advisera reinforce this: Impact should be based on actual business effect, not assumptions.

And that’s only possible when the system knows what’s connected to what.

The system is coming together

At this stage, triage is no longer a front-line activity. Rather, it’s a system capability.

| Layer | What it contributes |
| --- | --- |
| Categorization | Helps tickets be understood and routed correctly |
| Prioritization | Sequences work based on impact and urgency |
| Asset/service linkage | Grounds impact assessment in real dependencies |
| Automation | Enforces decisions with less manual intervention |

Each layer builds on the previous one, resulting in a system that doesn’t just process tickets. It understands them.

Why asset-linked tickets improve operational visibility

Most service desks operate at the surface level:

- managing queues

- responding to requests

- resolving incidents

But once tickets are connected to assets and services, the role changes. You’re no longer just handling issues. You’re observing how your systems behave under stress.

And that’s where service management becomes operations management. The operational view becomes most valuable when ticket data starts driving decisions.

Turning service desk ticket data into operational insight

Once categorization and prioritization are stable, something shifts.

The service desk stops being just an intake function. It becomes a measurement system. At that point, tickets are no longer just work items. They’re structured data about how your environment behaves.

Why most service desks never reach this stage

In many organizations, reporting exists, but it doesn’t drive decisions because the underlying data is inconsistent, categories don’t align, priorities are subjective, and routing is noisy.

This means that trends can’t be trusted. If the data isn’t trusted enough, it doesn’t get used.

In practice, this often looks like:

- Reports are exported but not reviewed

- Categories are too broad to diagnose issues

- Teams debate the data instead of acting on it

- “Other” becomes one of the largest categories

- SLA reports show breaches without explaining root causes

Guidance from organizations like HDI makes this explicit: Categorization is what enables trend analysis and proactive improvement.

If it’s flawed, the system can’t tell you what to fix.

What changes when the data becomes reliable

When categorization, prioritization, and linkage are consistent, patterns start to emerge.

Not occasionally but continuously.

Various ITSM platforms position this as the foundation for problem management.

Because now you can see:

- Which categories generate the most incidents

- Which services degrade repeatedly

- Which components fail under load

And, more importantly, you can prioritize improvement work based on evidence rather than intuition.
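As a rough sketch, the three questions above reduce to counting tickets along a consistent field. The ticket records, category names, and the `top_n` helper are made up for illustration; the only requirement is that the fields are populated consistently.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple

# Hypothetical closed incidents with category, service, and CI populated.
tickets: List[Dict[str, Optional[str]]] = [
    {"category": "Network > VPN", "service": "remote-access", "ci": "vpn-gw-01"},
    {"category": "Network > VPN", "service": "remote-access", "ci": "vpn-gw-01"},
    {"category": "Network > VPN", "service": "remote-access", "ci": "vpn-gw-02"},
    {"category": "Email", "service": "email", "ci": "mail-01"},
    {"category": "Email", "service": "email", "ci": "mail-01"},
    {"category": "Other", "service": None, "ci": None},
]


def top_n(records: List[dict], key: str, n: int = 3) -> List[Tuple[str, int]]:
    """Count records by one field, skipping records where it is empty."""
    counts = Counter(r[key] for r in records if r.get(key))
    return counts.most_common(n)


print(top_n(tickets, "category", 2))  # which categories generate the most incidents
print(top_n(tickets, "service", 1))   # which services degrade repeatedly
print(top_n(tickets, "ci", 2))        # which components keep failing
```

The counting is trivial. What makes the result usable is upstream: if categories drift or linkage is missing, the same code returns numbers nobody trusts.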

What mature teams actually do next

At this stage, the service desk is no longer reactive.

It feeds the rest of IT operations.

A few behaviors consistently show up.

1. They use category trends to drive problem management

Recurring issues are no longer treated as isolated incidents.

They’re grouped, analyzed, and addressed at the source. This includes identifying patterns such as repeat ticket drivers, faulty components, and chronic service degradation.

Problem management becomes targeted, not theoretical.

2. They measure and reduce routing and escalation noise

Misrouting isn’t just an annoyance. It’s a system signal.

Mature teams track how often tickets are reassigned and where escalation paths break down.

Then, they fix the underlying causes, such as:

- category gaps

- ownership ambiguity

- routing logic issues

The goal isn’t fewer escalations. Rather, it’s fewer unnecessary ones.

A monthly review should look at:

- The most reassigned categories

- Categories with unclear ownership

- Escalation paths that repeat unnecessarily

- SLA breaches caused by routing delays

- Routing rules that need to be updated
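The first item in that review, the most reassigned categories, can be sketched as an average reassignment count per category. The `reassignments` field and the sample data are assumptions; most platforms expose something similar through assignment history, but the exact name will differ.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical monthly export: one row per ticket with its reassignment count.
monthly_tickets: List[Dict] = [
    {"category": "Access", "reassignments": 2},
    {"category": "Access", "reassignments": 3},
    {"category": "Hardware", "reassignments": 0},
    {"category": "Hardware", "reassignments": 1},
    {"category": "Other", "reassignments": 4},
]


def reassignment_by_category(records: List[Dict]) -> List[Tuple[str, float]]:
    """Average reassignments per ticket for each category, worst first."""
    totals = defaultdict(lambda: {"tickets": 0, "reassignments": 0})
    for record in records:
        row = totals[record["category"]]
        row["tickets"] += 1
        row["reassignments"] += record["reassignments"]
    report = [
        (category, row["reassignments"] / row["tickets"])
        for category, row in totals.items()
    ]
    return sorted(report, key=lambda item: item[1], reverse=True)


for category, average in reassignment_by_category(monthly_tickets):
    print(f"{category}: {average:.1f} reassignments per ticket")
```

Categories at the top of this list are candidates for the fixes named earlier: a category gap, ambiguous ownership, or a routing rule that needs updating.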

3. They treat major incidents as inputs, not endpoints

In less mature environments, major incidents end when the system recovers.

In mature ones, however, that’s where the work starts.

Major incidents feed problem records, root cause analyses, and corrective actions.

Because a resolved P1 without follow-up is just a recurring incident waiting to happen.

A post-incident review should ask:

- What failed?

- Which service was affected?

- Was the impact assessment accurate?

- Were communications timely?

- Was a problem record created?

- What corrective actions will reduce recurrence?

4. They use change management to reduce future incidents

Over time, incident volume shouldn’t stay constant. It should decrease. That only happens when change is controlled.

Practices aligned with frameworks such as ITIL treat change enablement as a mechanism for assessing risk, coordinating updates, and reducing unintended disruption.

Especially when changes, incidents, and services are linked through shared systems.

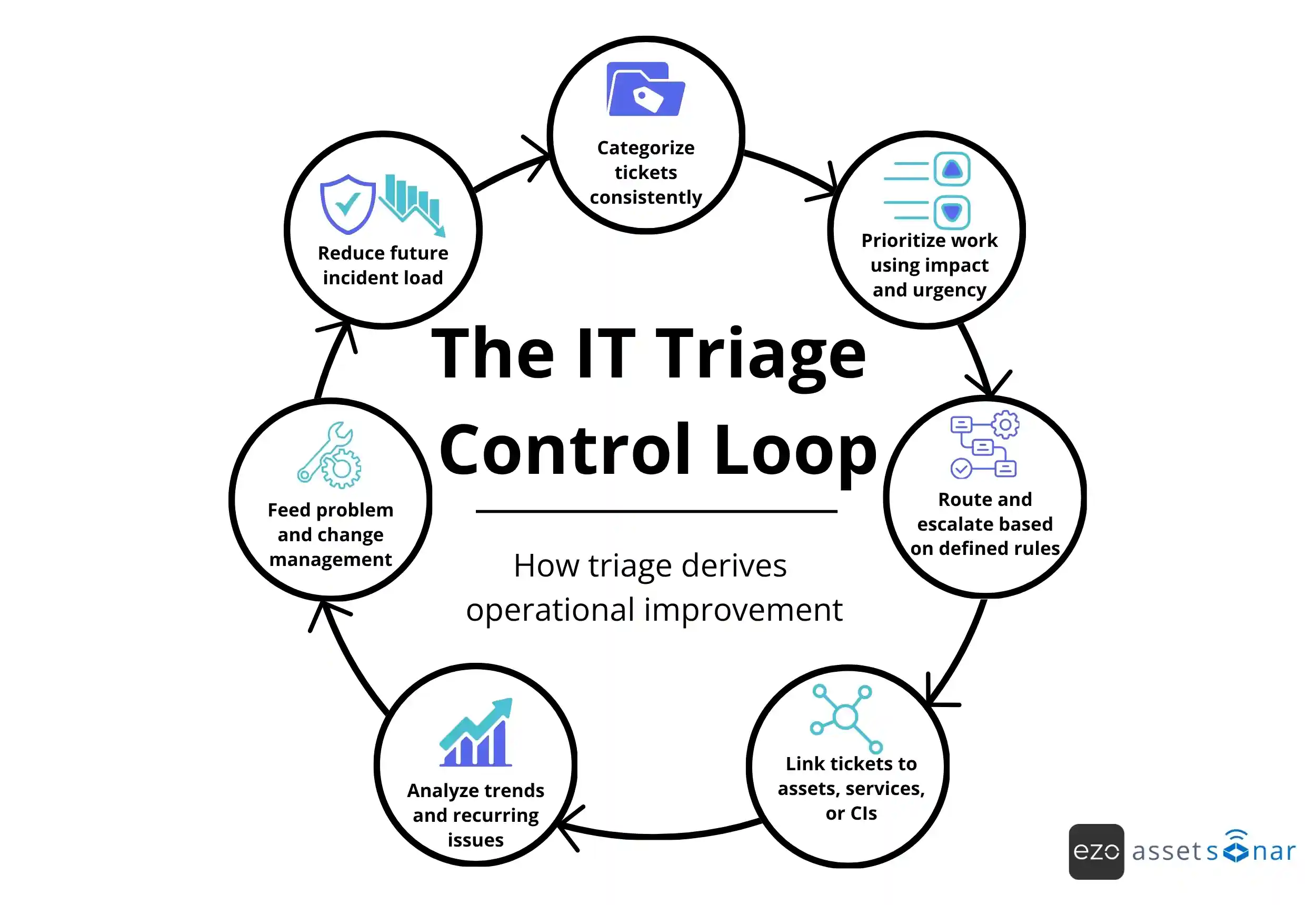

The IT triage control loop

The figure above summarizes how the IT ticket triage control loop works.

This is no longer service desk optimization. It’s operational improvement.

The final shift: From handling tickets to improving the system

Most teams stop at resolution. Mature teams go further.

They use tickets to answer what is failing repeatedly, where capacity is breaking down, and what needs to change upstream.

Because the goal isn’t to close tickets faster. It’s to generate fewer of them over time.

A practical self-check for your team

If you’re evaluating whether your system is operating at this level, a few questions surface quickly:

- Can you clearly explain your definitions of impact and urgency with examples, and do all teams agree on them?

- Is your categorization scheme governed, reviewed, and stable over time?

- Can high-priority tickets be linked to services, assets, or CIs to improve impact assessment and root cause analysis?

- Are priorities, routing, and escalations enforced through automation or dependent on manual triage?

If the answers to these are inconsistent, the issue isn’t effort.

It’s the design of the triage system.